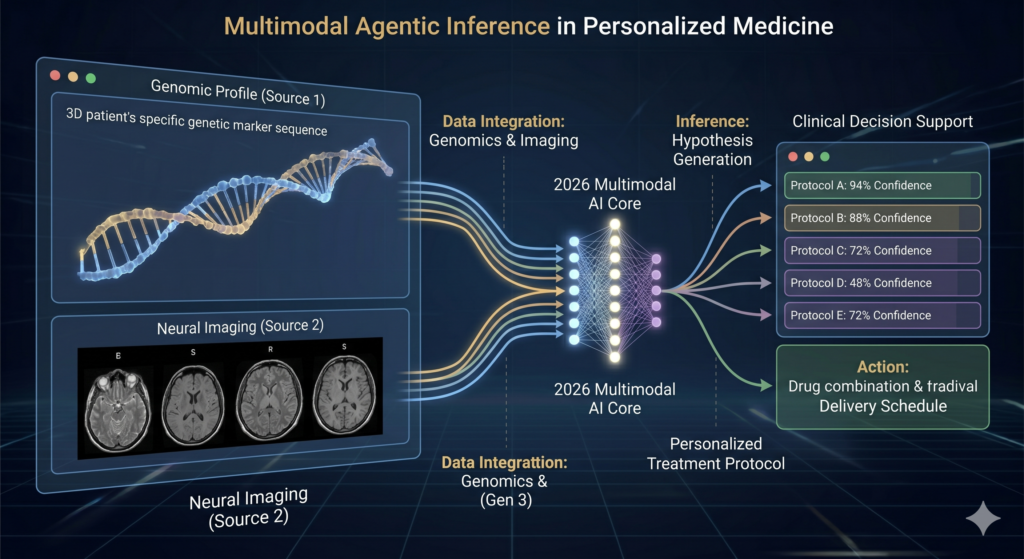

The digital landscape of 2026 is no longer defined by simple automation; it is driven by sophisticated reasoning. At the heart of every autonomous vehicle, personalized medical diagnostic tool, and hyper-efficient supply chain lies a complex AI Algorithm. Understanding how these digital “brains” operate is no longer just for data scientists—it is essential knowledge for any professional navigating the modern economy.

In this comprehensive guide, we will dissect the layers of modern machine intelligence, exploring how an algorithm works at a fundamental level and decoding the mystery of how AI thinks in an era of agentic workflows and multimodal reasoning.

What is an AI Algorithm? Defining the Modern Neural Framework

To understand the current state of technology, we must first define the AI Algorithm. Unlike traditional software that follows rigid “if-then” logic, a modern AI algorithm is a dynamic mathematical structure designed to identify patterns, make predictions, and improve its own performance over time through exposure to data.

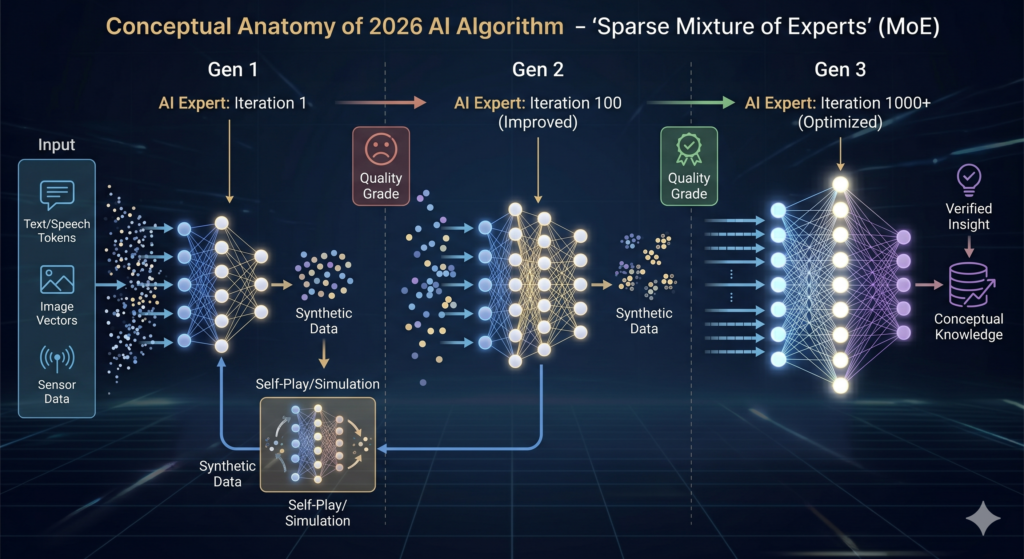

By 2026, many of the largest models have shifted from monolithic designs to “Sparse Mixture of Experts” (MoE) architectures. This means that instead of one giant brain trying to do everything, an AI Algorithm today is often a collection of specialized sub-networks, with a routing component activating only the few experts that are relevant to a given input.
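To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. The four experts, the `moe_forward` name, and the gate scores are all hypothetical; in a real MoE model the gate scores come from a learned router and each expert is a neural sub-network rather than a one-line function.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical experts: each one is just a simple function of a number.
EXPERTS = [
    lambda x: 2.0 * x,    # expert 0
    lambda x: x + 10.0,   # expert 1
    lambda x: -x,         # expert 2
    lambda x: x * x,      # expert 3
]

def moe_forward(x, gate_scores, top_k=2):
    """Route x to the top_k highest-scoring experts and mix their outputs."""
    # Indices of the k experts with the highest gate scores.
    chosen = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)[:top_k]
    # Renormalize over the chosen experts; the others are never evaluated.
    weights = softmax([gate_scores[i] for i in chosen])
    output = sum(w * EXPERTS[i](x) for w, i in zip(weights, chosen))
    return output, chosen

# Assumed gate scores for illustration only.
output, chosen = moe_forward(3.0, gate_scores=[0.0, 5.0, 5.0, -1.0])
```

The point of the sketch is the sparsity: only the chosen experts run, so compute grows with `top_k` rather than with the total number of experts.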

The Shift from Static Code to Fluid Learning

Traditional algorithms are like recipes: follow steps A, B, and C to get result D. Modern AI, however, is more like a student. It is given a goal and a massive dataset, and it must “learn” the best path to reach that goal. This transition from programmed logic to learned behavior is the foundation of how AI thinks today.

How an AI Algorithm Works: From Input to Insight

The journey of data through a neural network is a marvel of mathematical engineering. To grasp how an algorithm works, we need to look at the three primary stages of the process: Input, Processing, and Output.

1. The Input Layer and Tokenization

Everything an AI consumes—text, images, audio, or sensory data from a robot—must be converted into numbers. This process is called tokenization and embedding.

- Text: Words are broken into tokens and mapped into a high-dimensional vector space.

- Images: Pixel values are read as numeric arrays, often split into small patches, so that later layers can pick out gradients, edges, and textures.

- Contextual Weighting: In 2026, models use attention mechanisms (“Global Attention”) to weigh how each piece of data relates to every other piece within the model’s context window.
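The text path above can be sketched in a few lines. This is a toy illustration under stated assumptions: it uses a whitespace tokenizer and a randomly initialized embedding table, whereas production systems typically use subword tokenizers and learned embeddings. All function names here are made up for the example.

```python
import random

def tokenize(text):
    """Naive whitespace tokenizer (real systems use subword schemes)."""
    return text.lower().split()

def build_vocab(tokens):
    """Assign each distinct token an integer ID in first-seen order."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab

def make_embeddings(vocab_size, dim, seed=0):
    """Random embedding table; in a trained model these values are learned."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)]
            for _ in range(vocab_size)]

tokens = tokenize("The cat saw the dog")
vocab = build_vocab(tokens)
token_ids = [vocab[t] for t in tokens]       # [0, 1, 2, 0, 3]
table = make_embeddings(len(vocab), dim=4)
vectors = [table[i] for i in token_ids]      # one 4-dimensional vector per token
```

Note that both occurrences of "the" map to the same ID and therefore the same vector; it is the later attention layers that let the model treat them differently depending on context.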

2. The Hidden Layers: The Engine of Inference

The “thinking” happens in the hidden layers. This is where the AI Algorithm applies weights and biases to the input data. Each layer extracts increasingly complex features. For example, in facial recognition:

- Layer 1 identifies edges.

- Layer 2 identifies shapes (circles, lines).

- Layer 3 identifies features (eyes, noses).

- Final Layers identify the specific person.
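As a rough sketch of what “applying weights and biases” means, the snippet below runs a two-layer forward pass in plain Python. The weights `W1`, `B1`, `W2`, `B2` are hand-picked, hypothetical values chosen so the arithmetic is easy to follow; a real network learns them and has far more of them.

```python
def relu(values):
    """Non-linearity: negative values become zero."""
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    """Fully connected layer: each output is a weighted sum plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

# Hypothetical parameters: 2 inputs -> 2 hidden units -> 1 output.
W1 = [[1.0, -1.0], [0.5, 0.5]]
B1 = [0.0, -1.0]
W2 = [[1.0, 2.0]]
B2 = [0.5]

def forward(inputs):
    hidden = relu(dense(inputs, W1, B1))   # first layer extracts simple features
    return dense(hidden, W2, B2)           # second layer combines them

result = forward([3.0, 1.0])   # hidden = [2.0, 1.0], output = [4.5]
```

Stacking more `dense` and `relu` steps is what lets deeper layers respond to progressively more complex patterns.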

3. The Output Layer: Decision and Probability

The final stage isn’t a blind “guess”; it is a probability distribution over possible outcomes. Whether the AI is generating a line of code or identifying a tumor in a scan, it typically selects (or samples from) the outcomes to which its training assigns the highest probability.
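One common way to turn a network’s raw output scores into probabilities is the softmax function. The sketch below assumes three hypothetical class labels and made-up scores, and it picks the single most probable label; generative models often sample from the distribution instead.

```python
import math

def softmax(logits):
    """Map raw scores to probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict(logits, labels):
    """Return the most probable label together with its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return labels[best], probs[best]

# Hypothetical scores for three classes.
label, confidence = predict([2.0, 1.0, 0.1], ["cat", "dog", "bird"])
```

Here `label` is `"cat"` with a probability of roughly 0.66, which shows that the “highest confidence” answer can still leave substantial probability on the alternatives.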

How AI Thinks: Decoding Machine Reasoning in 2026

The phrase “how AI thinks” is often used metaphorically, but in 2026, “System 2 Reasoning” has brought the metaphor closer to reality. Early AI (System 1) was impulsive and focused on pattern matching. Modern AI utilizes Chain-of-Thought (CoT) processing to “think” before it speaks.

Predictive Processing and Internal Monologues

When you ask a modern AI a complex question, it doesn’t just emit the first likely answer. It generates intermediate reasoning steps, still one token at a time, that act as an internal plan: it breaks the problem into sub-tasks, checks its own logic, and iterates on its answer before presenting it to the user. This “deliberative” phase is a hallmark of how the AI Algorithm has evolved.

The Mathematics of “Thinking”

At its core, the “thought” process is shaped by an optimization problem solved during training. The goal is to minimize the “Loss Function,” which measures the difference between the AI’s prediction and the actual truth. The loss is reduced step by step using the gradient descent update rule:

$$\theta_{t+1} = \theta_t - \eta \cdot \nabla J(\theta_t)$$

In this equation:

- $\theta$ represents the model’s weights.

- $\eta$ is the learning rate.

- $\nabla J(\theta)$ is the gradient of the loss function.
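To see the update rule in action, here is a minimal sketch on a toy one-parameter problem. The loss $J(\theta) = (\theta - 3)^2$ is an assumption chosen for illustration because its minimum at $\theta = 3$ is known in advance; real models apply the same rule to billions of parameters with gradients computed by backpropagation.

```python
def loss(theta):
    """Toy loss J(theta) = (theta - 3)^2, minimized at theta = 3."""
    return (theta - 3.0) ** 2

def grad(theta):
    """Derivative of the toy loss: dJ/dtheta = 2 * (theta - 3)."""
    return 2.0 * (theta - 3.0)

def gradient_descent(theta, learning_rate=0.1, steps=50):
    """Repeatedly apply theta <- theta - learning_rate * grad(theta)."""
    for _ in range(steps):
        theta = theta - learning_rate * grad(theta)
    return theta

theta_final = gradient_descent(theta=0.0)  # moves from 0.0 toward 3.0
```

With a learning rate of 0.1, each step shrinks the distance to the minimum by a factor of 0.8, so `theta_final` ends up very close to 3. A learning rate that is too large would overshoot instead of converging.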

Takeaway: When we ask how AI thinks, we are really asking how it minimizes its margin of error across billions of parameters simultaneously.

The Learning Cycle: How AI Evolves Over Time

An AI Algorithm is never truly “finished.” It is in a constant state of refinement. In 2026, the learning process has moved beyond simple supervised learning into more autonomous realms.

Reinforcement Learning from Human Feedback (RLHF) 2.0

While early models relied heavily on humans labeling data, the 2026 AI Algorithm uses advanced RLHF where the AI learns from subtle human cues and complex preferences. This allows the AI to align its “thinking” with human ethics and nuances.

Self-Play and Synthetic Data

One of the biggest breakthroughs in how an algorithm works today is self-learning. AI models now engage in “self-play,” where two versions of the same algorithm compete or collaborate to solve problems, generating their own “synthetic data” to learn from. This has eased the data scarcity concerns of the early 2020s.

5 Key Components of a Modern AI Algorithm

To summarize the anatomy of these systems, we can break them down into five essential components:

- Architecture: The skeletal structure (e.g., Transformers, State Space Models).

- Parameters: The “synapses” of the AI—often numbering in the trillions in 2026.

- Data Corpus: The diverse, multimodal knowledge base the AI was trained on.

- Inference Engine: The part of the software that runs the “thinking” process in real-time.

- Alignment Layer: The safety protocols that ensure the AI’s outputs are helpful and harmless.

People Also Ask

How does an AI algorithm learn from new information?

In 2026, an AI Algorithm learns through “fine-tuning” or “In-Context Learning.” Fine-tuning updates the model’s internal weights using new datasets, while In-Context Learning allows the AI to use information provided in a specific prompt to adjust its reasoning without changing its permanent structure.

Is AI “thinking” the same as human thinking?

No. While we use terms like how AI thinks, the processes are fundamentally different. Human thinking is biological, emotional, and context-dependent. AI “thinking” is a massive-scale statistical inference process driven by high-dimensional mathematics. However, the results are increasingly indistinguishable in terms of logical output.

Why do AI algorithms make mistakes (hallucinations)?

Hallucinations occur when the AI Algorithm prioritizes grammatical or structural probability over factual accuracy. In 2026, this is mitigated by “Retrieval-Augmented Generation” (RAG), which retrieves relevant passages from an external knowledge source and supplies them to the model as context, so the answer can be grounded in that material rather than in memorized patterns alone.
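The retrieval step in RAG can be sketched very simply. The example below is a toy under stated assumptions: it ranks documents by word overlap, whereas real systems usually compare embedding vectors, and the documents, function names, and prompt wording are all hypothetical.

```python
def score(query, document):
    """Count the distinct query words that also appear in the document."""
    query_words = set(query.lower().split())
    doc_words = set(document.lower().split())
    return len(query_words & doc_words)

def retrieve(query, documents, top_k=1):
    """Return the top_k documents with the highest overlap score."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:top_k]

def build_prompt(query, documents):
    """Prepend the retrieved context so the model can ground its answer."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base.
DOCS = [
    "gradient descent updates weights using the learning rate",
    "tokenization splits text into tokens",
]
prompt = build_prompt("how does gradient descent use the learning rate", DOCS)
```

The resulting `prompt` would then be passed to the language model. Retrieval reduces hallucinations only as far as the retrieved passages are accurate and relevant.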

The Future of the AI Algorithm: What’s Next?

As we look toward the late 2020s, the anatomy of the AI Algorithm is becoming increasingly “agentic.” We are moving away from AI that simply answers questions toward AI that takes action. Understanding how an algorithm works today is the first step in mastering the tools of tomorrow.

The models of 2026 are more efficient, more logical, and more integrated into our daily lives than ever before. By mastering the concepts of how AI thinks, businesses and individuals can better leverage these systems to solve the world’s most pressing challenges.